01 About

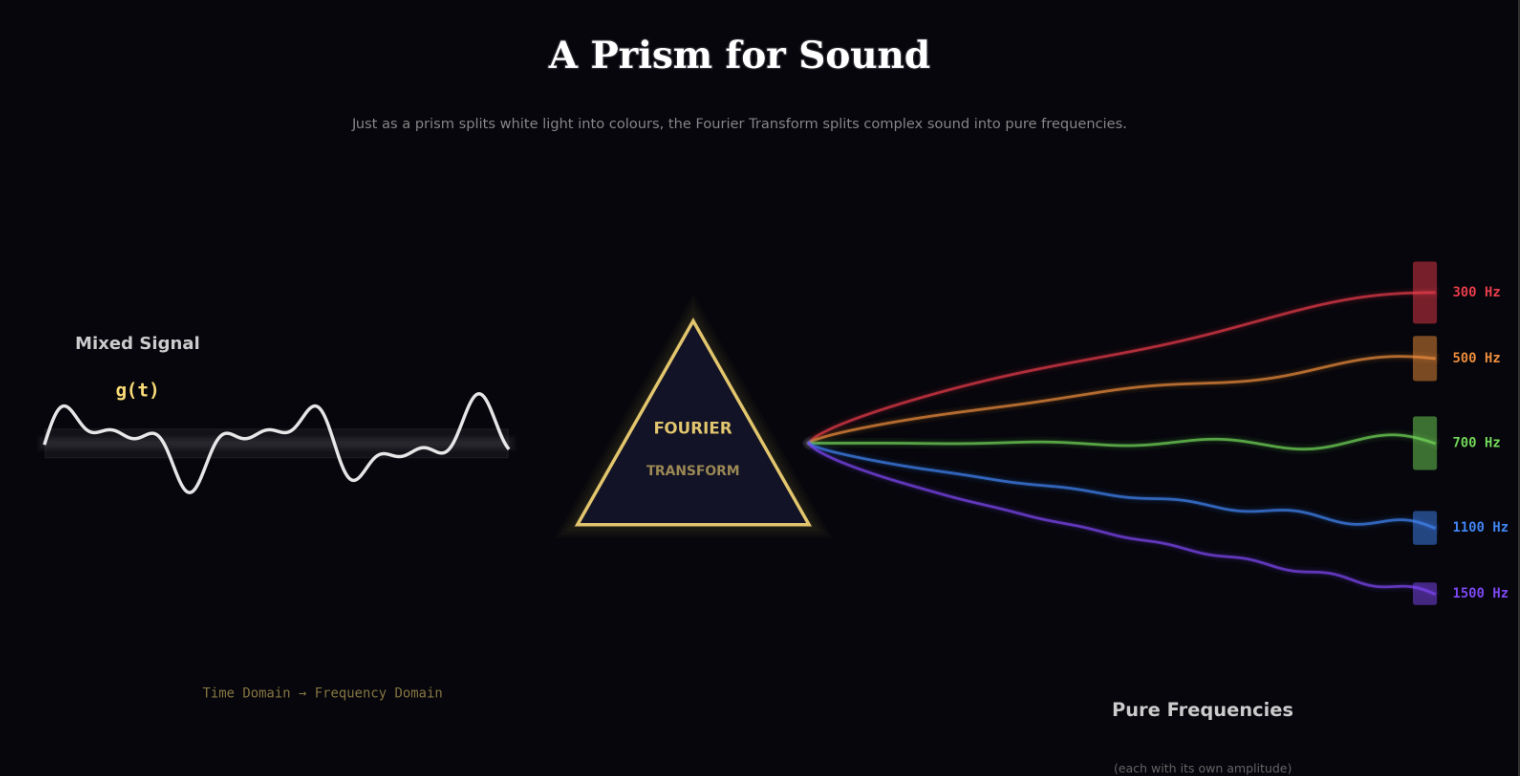

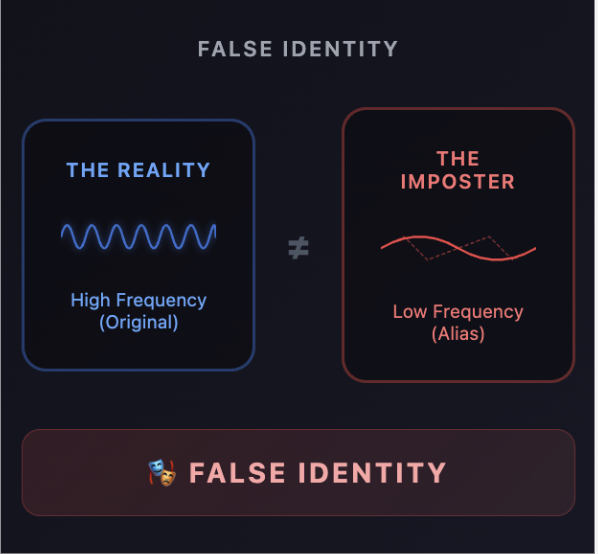

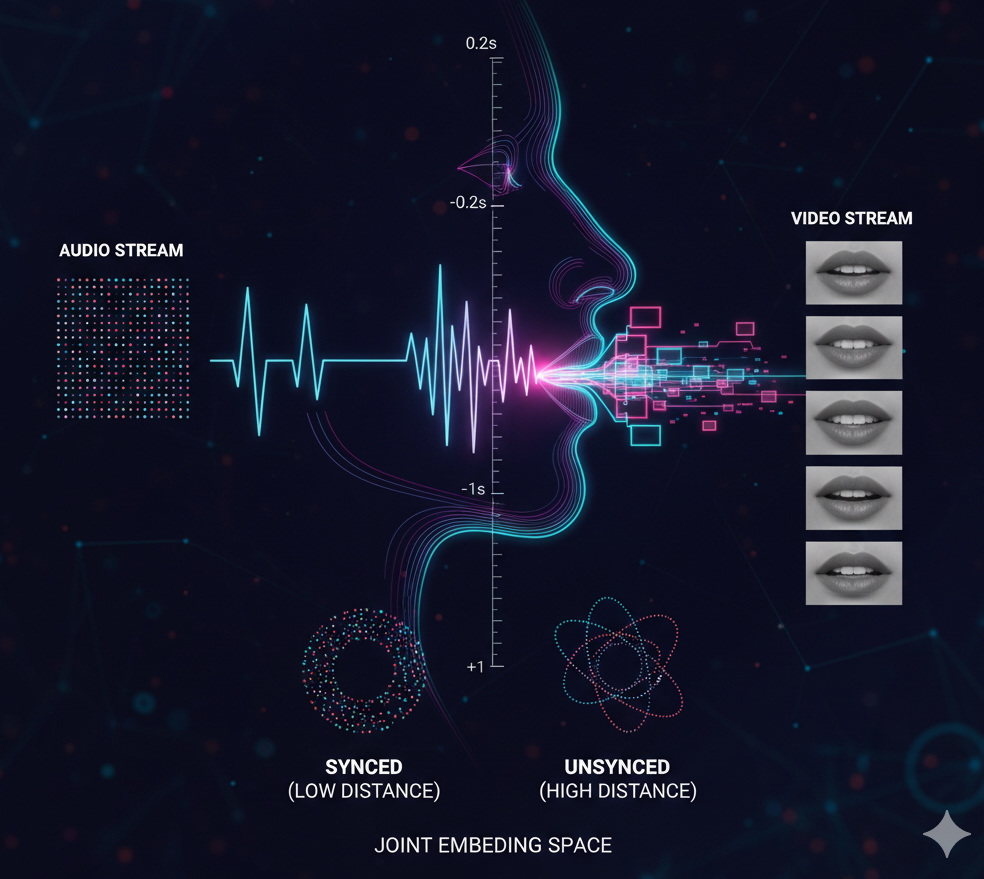

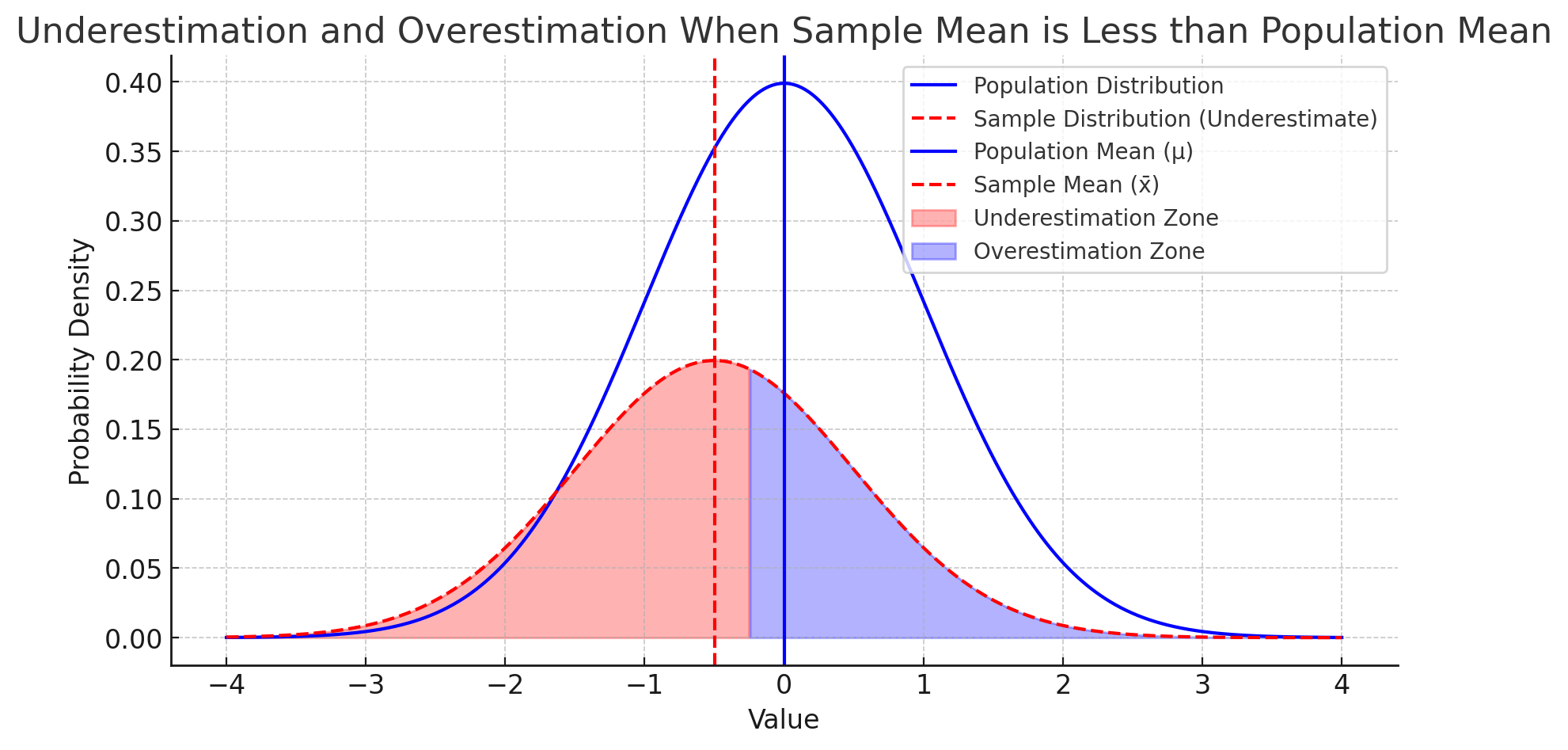

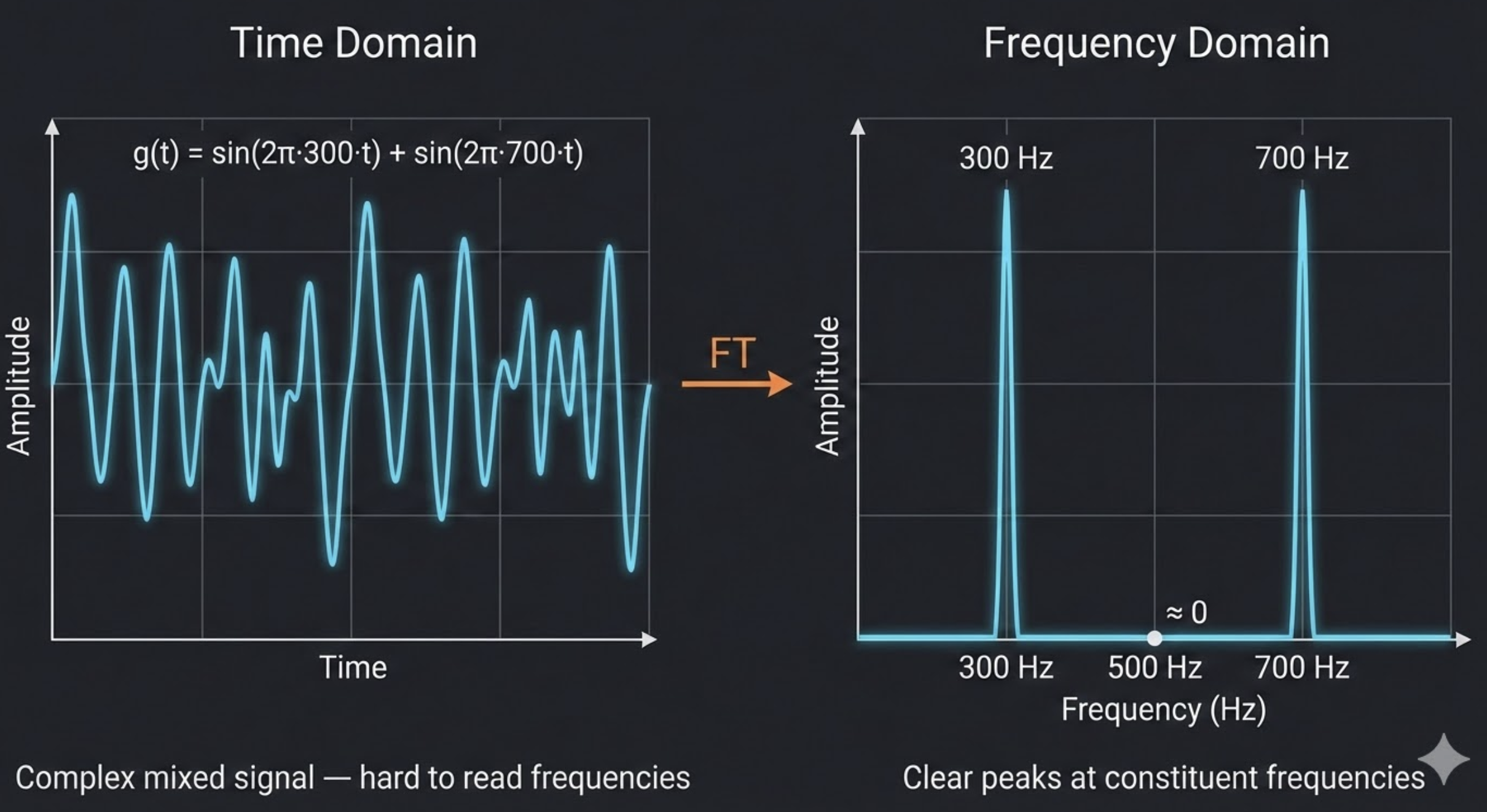

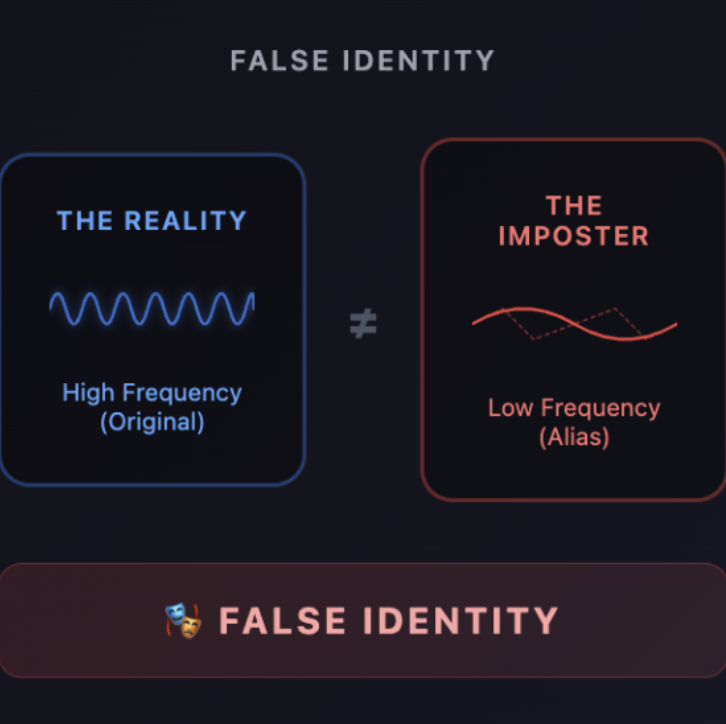

Research Scientist at Invideo with hands-on experience in audio signal processing, speech enhancement, and large-scale distributed training. Built and pre-trained GenHencer from scratch, achieving strong quantitative and perceptual results on industry benchmarks.

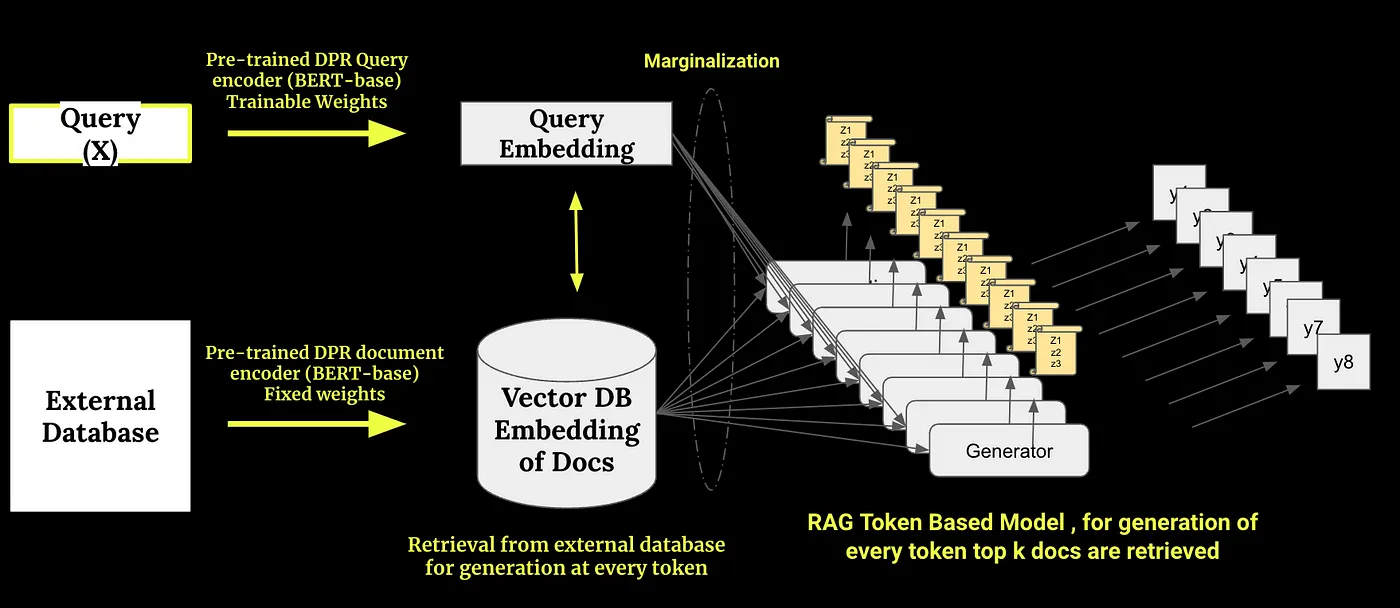

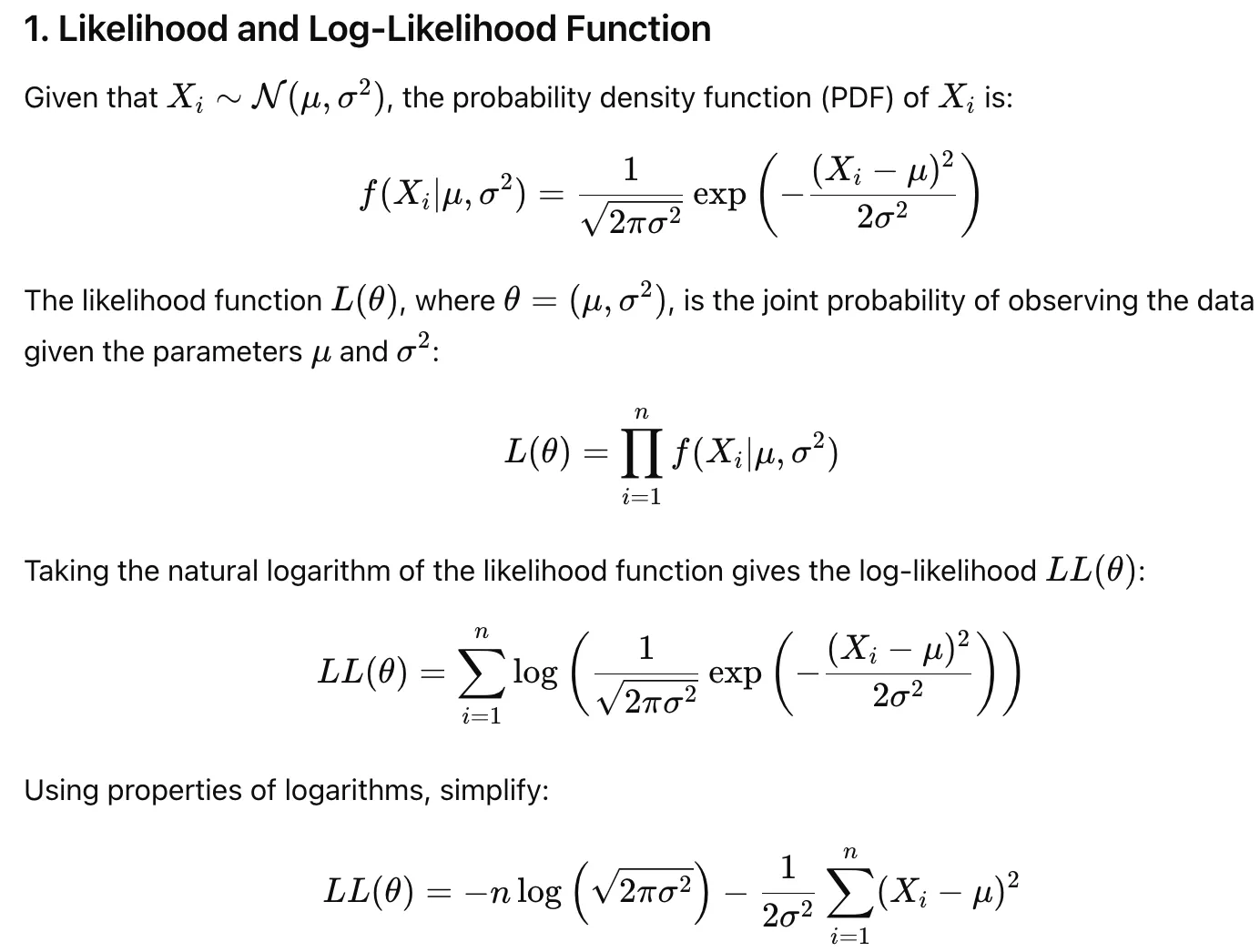

Deeply interested in the latest developments in ASR (Whisper, Wav2Vec2, HuBERT) and TTS systems. Strong foundation in mathematics, machine learning, and generative AI, with in-depth knowledge of LLMs. Published four articles in Towards Data Science, with 25,000+ views.

Indian Institute of Technology Roorkee

B.Tech · JEE Advanced AIR 6851 · 2021 – 2025